Most people think the AI revolution is about better models.

They’re wrong.

We have already crossed the threshold where models are good enough.

The bottleneck shifted.

The problem is no longer intelligence. It is coordination.

What Paperclip reveals is simple: AI does not fail because it is weak. It fails because it is unmanaged.

What This Really Is

Paperclip is not a coding tool, an agent framework, or a productivity layer.

It is a company operating system for AI labor.

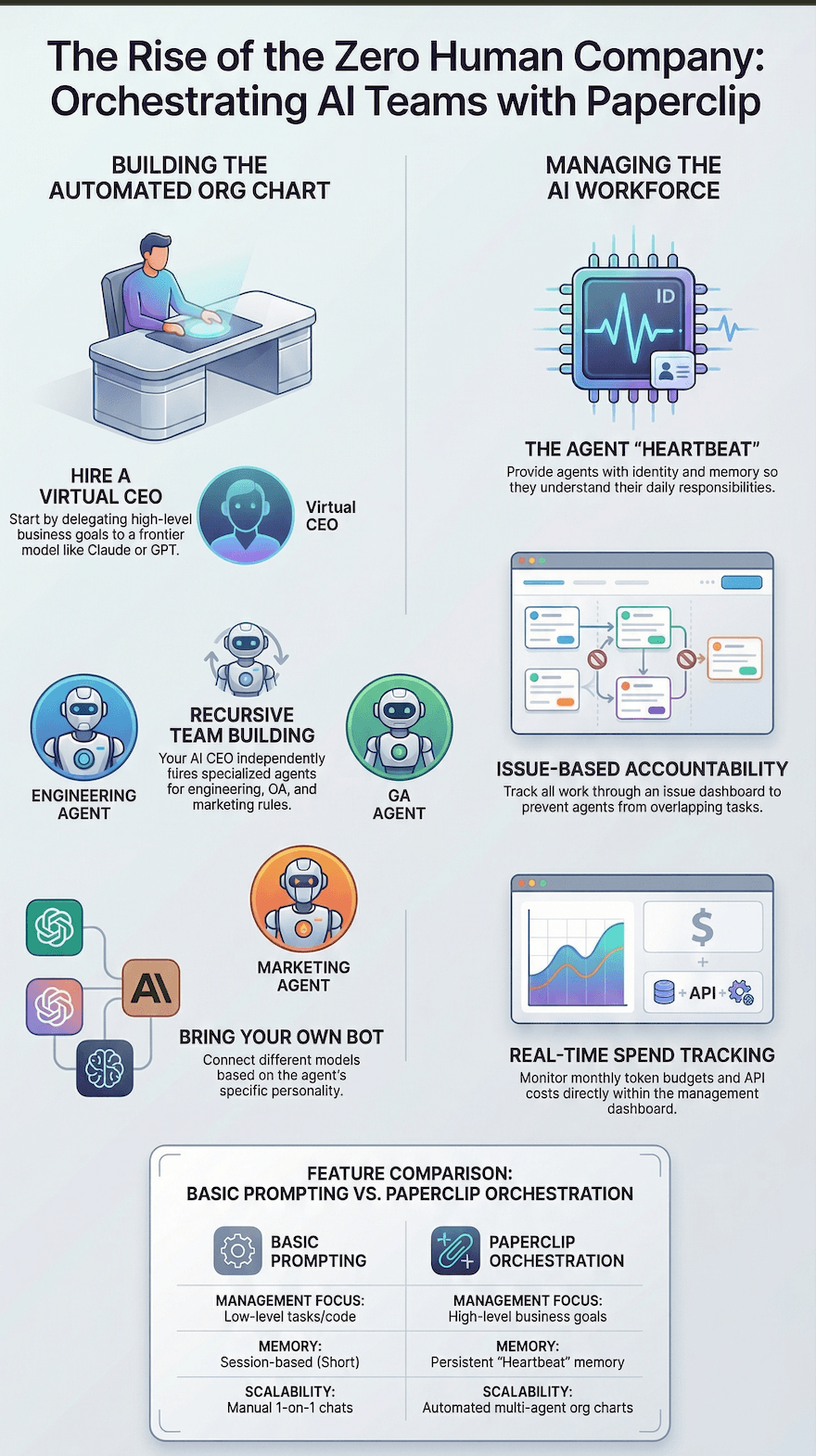

Its core shift is straightforward: instead of managing prompts and individual tasks, you manage business goals, organizational structure, and execution constraints.

This is the leap: from prompting to managing, and from managing to orchestrating.

The History: How We Got Here

This system only makes sense in context.

The first era of AI work was chat. Tools like ChatGPT and Claude made intelligence conversational, but the work was manual, stateless, and heavily dependent on the user to carry context from one interaction to the next.

The second era was action. Agent tools moved from answering questions to taking actions. That looked like progress, but it introduced a new problem. Once multiple agents or autonomous scripts were running at once, systems started to break down. Context collapsed. Agents stepped on each other’s work. Token budgets became hard to monitor. Execution became possible, but it became chaotic.

Paperclip represents the third era: orchestration.

This is the point where AI work stops being a loose collection of capable bots and starts becoming an accountable organization.

We did not just need smarter agents. We needed management.

The Founder and Founding Team

Paperclip stands out in part because of who built it.

The materials identify the founding team as Dota, Devin Foley, and Scott Tong.

Dota is the creator and technical architect behind the project. Devin Foley brings early engineering experience from Slack and Figma. Scott Tong was formerly Head of Product Design at Pinterest.

That combination matters. This is not a research project built in isolation from real operating constraints. It comes from people who have worked inside high-performance product and design systems and understand that execution at scale is rarely limited by talent alone. It is limited by coordination, accountability, and standards.

That is why Paperclip feels different from most agent tools. It was built with the mindset of operators, not just model enthusiasts.

The Paradigm Shift

The old model looked like this: human, then tools, then tasks, then output.

The new model looks like this: human, then system, then org chart, then output.

That means your role changes. You are no longer sitting inside execution. You are designing the structure that execution runs through.

In practical terms, the human becomes the board member. The AI CEO becomes the strategic and delegation layer. The specialized agents become the workforce.

You do not execute the work. You design the environment in which the work gets executed.

The System: How Paperclip Actually Works

The easiest way to understand Paperclip is to think of it as an orchestration layer sitting on top of models, tools, and workflows.

You begin with a mandate. That mandate is the business objective. It is not a prompt for a single task. It is the outcome the organization is supposed to achieve.

Once the objective is defined, the system assigns a high-capability model to act as the CEO or proxy executive. That AI CEO does not simply produce an answer. It breaks the goal into a plan, determines what roles are needed, and proposes the organizational structure required to complete the mandate.

From there, the system instantiates the org chart. The CEO hires or activates specialized agents for engineering, QA, design, content, or other functions. Those agents are assigned work through a centralized task and issue structure rather than through scattered prompts or disconnected sessions.

At that point, Paperclip becomes less like a chatbot and more like a management dashboard for AI labor.
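
The flow from mandate to org chart to assigned issues can be sketched as a small data structure. This is only an illustration of the idea, not Paperclip's actual schema: the names `Role`, `Company`, `hire`, `assign`, and `direct_reports` are all hypothetical, and the mandate text is made up.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Role:
    title: str
    reports_to: Optional[str] = None  # None marks the top of the chart


@dataclass
class Company:
    mandate: str  # the business objective, not a single-task prompt
    roles: dict[str, Role] = field(default_factory=dict)
    issues: list[tuple[str, str]] = field(default_factory=list)  # (assignee, title)

    def hire(self, title: str, reports_to: Optional[str] = None) -> Role:
        # A new role must report to someone already on the chart.
        if reports_to is not None and reports_to not in self.roles:
            raise ValueError(f"unknown manager: {reports_to}")
        role = Role(title, reports_to)
        self.roles[title] = role
        return role

    def assign(self, assignee: str, title: str) -> None:
        # Work goes through one central issue list, never through ad hoc prompts.
        if assignee not in self.roles:
            raise ValueError(f"unknown role: {assignee}")
        self.issues.append((assignee, title))

    def direct_reports(self, manager: str) -> list[str]:
        return [r.title for r in self.roles.values() if r.reports_to == manager]


# Hypothetical example: the CEO role is created first, then staffs the chart.
company = Company(mandate="Ship a paid beta of the product")
company.hire("CEO")
for title in ("Engineer", "QA", "Designer"):
    company.hire(title, reports_to="CEO")
company.assign("Engineer", "Build the signup flow")
company.assign("QA", "Verify the signup flow in a browser")
```

The point of the sketch is that the human writes only the mandate; everything below it is structure the system can inspect.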

The Heartbeat: Why the System Holds Together

The most important mechanism inside Paperclip is the heartbeat.

This solves one of the deepest flaws in current AI systems: they do not persist the way humans do. They can be highly capable, but they are effectively amnesiac from one working session to the next unless structure is reintroduced.

Paperclip addresses that by making every agent go through a reinitialization loop each time it wakes up.

- It confirms its identity and role.

- It reads the current plan and system instructions.

- It checks for assigned issues.

- It breaks work into concrete tasks.

- It executes the assignment.

- It stores memory back into the system before sleeping.

This matters because continuity no longer depends on the model remembering. Continuity depends on the system re-establishing context every time work resumes.

That is a major conceptual shift. Memory is no longer internal. It is system-injected.
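
A minimal sketch of the heartbeat loop described above, assuming a simple in-memory store. The `Store` class, the `heartbeat` function, and the `execute` callback are hypothetical names for illustration; a real agent would call a model where the stand-in `fake_execute` is used here.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Store:
    """Hypothetical shared state standing in for the system's real persistence."""
    plan: str
    issues: dict[str, list[str]] = field(default_factory=dict)  # role -> open issues
    memory: dict[str, list[str]] = field(default_factory=dict)  # role -> saved notes


def heartbeat(role: str, store: Store, execute: Callable[[str, str], str]) -> list[str]:
    # Steps 1-2: rebuild identity, plan, and prior notes from the store, not the model.
    context = "\n".join(
        [f"You are the {role} agent.", f"Plan: {store.plan}", *store.memory.get(role, [])]
    )
    results = []
    # Step 3: check for assigned issues (taken off the open list as they are handled).
    for issue in store.issues.pop(role, []):
        # Step 4: break the issue into concrete tasks (a fixed placeholder split).
        for task in (f"{issue}: implement", f"{issue}: verify"):
            # Step 5: execute the task with the re-established context.
            results.append(execute(context, task))
        # Step 6: write memory back to the system before sleeping.
        store.memory.setdefault(role, []).append(f"Done: {issue}")
    return results


seen = []  # contexts the stand-in executor received, kept for inspection


def fake_execute(context: str, task: str) -> str:
    seen.append(context)
    return f"ok: {task}"


store = Store(plan="Ship beta", issues={"QA": ["signup flow"]})
heartbeat("QA", store, fake_execute)      # first wake-up: no prior notes
store.issues["QA"] = ["billing page"]
heartbeat("QA", store, fake_execute)      # second wake-up: notes are injected
```

On the second wake-up the context handed to the executor already contains the note saved during the first one, even though nothing was held in the model between calls.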

Task Isolation and Accountability

Another reason Paperclip could be powerful is that it treats execution as a controlled operational system rather than an open-ended swarm.

Each issue is assigned to one agent at a time. That means one agent owns one active task. This reduces overlap, eliminates duplicate work, and prevents agents from colliding inside the same codebase or workflow.

Instead of multiple agents working blindly and interfering with one another, Paperclip enforces isolation and accountability. Work becomes traceable. Responsibility becomes visible. Progress becomes inspectable.

This is one of the most important differences between orchestration and simple automation.
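
One way to picture single ownership is an issue board with an atomic checkout. This is a sketch under assumptions: `IssueBoard`, `checkout`, and `release` are hypothetical names, the issue IDs are invented, and the rule that an agent holds at most one active issue is one reading of the description above.

```python
import threading
from typing import Optional


class IssueBoard:
    """Hypothetical board: each issue has at most one owner at a time."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._owner: dict[str, Optional[str]] = {}  # issue id -> owning agent

    def add(self, issue_id: str) -> None:
        with self._lock:
            self._owner.setdefault(issue_id, None)

    def checkout(self, issue_id: str, agent: str) -> bool:
        # The lock makes check-and-claim a single step, so two agents
        # waking at the same moment cannot both claim the same issue.
        with self._lock:
            if self._owner[issue_id] is not None:
                return False  # someone else already owns it
            if agent in self._owner.values():
                return False  # this agent already has an active issue
            self._owner[issue_id] = agent
            return True

    def release(self, issue_id: str, agent: str) -> None:
        with self._lock:
            if self._owner.get(issue_id) != agent:
                raise PermissionError(f"{agent} does not own {issue_id}")
            self._owner[issue_id] = None

    def owner(self, issue_id: str) -> Optional[str]:
        with self._lock:
            return self._owner[issue_id]


board = IssueBoard()
board.add("ISSUE-1")
board.add("ISSUE-2")
board.checkout("ISSUE-1", "engineer")  # True: the issue was free
board.checkout("ISSUE-1", "qa")        # False: already owned by engineer
board.checkout("ISSUE-2", "engineer")  # False: engineer already has active work
```

Because ownership is recorded in one place, asking who is responsible for an issue is a lookup rather than an investigation.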

Bring Your Own Bot: Model Routing as an Operating Advantage

Paperclip is also powerful because it is model-agnostic.

It is designed as a bring-your-own-bot system. That means different models can be assigned to different roles based on the economics and cognitive requirements of the work.

- Frontier models can be used for high-reasoning leadership roles like the AI CEO.

- Lower-cost or free models can be used for repetitive execution roles.

- Local tools can be used where privacy, speed, or marginal cost matter most.

This matters because it turns model selection into a managerial decision rather than a fixed platform dependency.

You do not buy one brain for the whole company. You build a workforce with differentiated intelligence matched to differentiated tasks.

That creates real operational leverage. High-cost cognition gets reserved for strategic decisions. Lower-cost systems handle volume and repetition. Precision replaces brute force.
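
As a rough sketch, role-based routing can be as small as a lookup table. Everything here is an assumption made for illustration: the role names, the tier names, and especially the prices, which are invented placeholders rather than real vendor rates.

```python
# Hypothetical mapping from role to model tier.
ROLE_TIER = {
    "ceo": "frontier",       # high-reasoning leadership role
    "engineer": "low_cost",  # repetitive execution
    "qa": "low_cost",
    "transcriber": "local",  # privacy- or cost-sensitive work
}

# Invented placeholder prices in USD per 1,000 tokens; not real vendor rates.
USD_PER_1K_TOKENS = {"frontier": 0.03, "low_cost": 0.002, "local": 0.0}


def route(role: str) -> str:
    """Return the model tier for a role, defaulting to the cheap hosted tier."""
    return ROLE_TIER.get(role, "low_cost")


def estimate_cost(role: str, tokens: int) -> float:
    """Estimate spend for a role's token usage under the placeholder prices."""
    return USD_PER_1K_TOKENS[route(role)] * tokens / 1000


# Compare the same workload across roles.
for role in ("ceo", "engineer", "transcriber"):
    print(role, route(role), estimate_cost(role, 50_000))
```

Keeping the table separate from the agents is what makes model choice a managerial decision: changing one entry reassigns a role without touching its work.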

Skills, Memory, and Functional Agents

Paperclip becomes more powerful when agents are not just roles, but fully equipped workers.

According to the materials, an agent can be assembled from several layers.

- A persona that defines identity, role, and constraints.

- A toolbelt of installed skills.

- A memory system that preserves continuity.

- An underlying model that provides reasoning capacity.

This means an agent is not just a prompt wrapper. It is a structured worker with a defined identity, capabilities, and operating context.

For example, a QA agent can be equipped with browser tools for visual verification. A video editor can be equipped with Remotion. A content agent can be equipped to monitor GitHub pull requests and turn them into formatted release notes or community updates.

That is important because it moves AI from generic intelligence to functional labor.
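
The four layers can be sketched as a plain data structure. This is an illustrative assumption, not Paperclip's actual configuration format: `Persona`, `AgentSpec`, `system_prompt`, and the example values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Persona:
    role: str
    identity: str
    constraints: tuple[str, ...] = ()


@dataclass
class AgentSpec:
    persona: Persona                                  # identity, role, constraints
    model: str                                        # underlying reasoning capacity
    skills: list[str] = field(default_factory=list)   # the toolbelt
    memory: list[str] = field(default_factory=list)   # notes that preserve continuity

    def system_prompt(self) -> str:
        # Flatten every layer except the model into the context the agent wakes up with.
        lines = [self.persona.identity]
        lines += [f"Constraint: {c}" for c in self.persona.constraints]
        lines += [f"Skill: {s}" for s in self.skills]
        lines += [f"Memory: {m}" for m in self.memory]
        return "\n".join(lines)


# Hypothetical QA agent equipped with a browser skill for visual verification.
qa = AgentSpec(
    persona=Persona("QA", "You are the QA agent.", ("Never merge code yourself",)),
    model="low-cost-model",
    skills=["browser"],
    memory=["Signup flow passed visual checks"],
)
print(qa.system_prompt())
```

Swapping the `model` field changes the agent's reasoning capacity without changing who it is or what it can do, which is what separates a structured worker from a prompt wrapper.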

Why This Could Be a Powerful Tool

Paperclip could be powerful because it solves the exact problems that appear when AI moves from one-off assistance to real organizational execution.

Without orchestration, more agents usually means more chaos. Costs become harder to monitor. Responsibility gets blurred. Work overlaps. Systems degrade. People lose trust because they cannot see what is happening underneath.

With orchestration, the opposite can happen.

- More agents can mean more throughput.

- More visibility can mean more trust.

- More structure can mean more leverage.

- More automation can still preserve accountability.

That is why Paperclip is more than a novelty. It addresses a real managerial problem inside the next generation of AI work: how do you coordinate multiple capable systems without losing control?

Its answer is that intelligence alone is not enough. You need hierarchy, memory, role clarity, task isolation, budget tracking, and evaluation loops.

The Human Role Changes

One of the most substantive implications of Paperclip is what it changes about the human role.

In older systems, the human stayed close to every task. The human prompted, corrected, pasted context, and supervised output at the line level.

In Paperclip’s model, the human moves up a layer.

The human becomes responsible for direction, standards, and taste.

That means defining the goal, approving the strategy, setting the constraints, and encoding what quality actually means.

This is important because AI can generate output, but it does not inherently understand your standards. It can execute, but it does not naturally possess your values.

That is why the long-term advantage may not belong to whoever has the cheapest intelligence. It may belong to whoever can best encode taste, rules, and organizational discipline into the system.

The Strategic Implication

If Paperclip’s model works, then the company itself starts to change shape.

Teams become more modular. Roles become more fluid. Organizational structures become easier to instantiate, copy, and import. Workflows become less dependent on fixed headcount and more dependent on system design.

The materials point toward this with the concept of acqui-hiring, where entire company structures or pre-configured teams can be imported into a Paperclip environment.

That is a much bigger idea than template sharing.

It suggests that organizations themselves may become portable assets.

Not just code. Not just prompts. Not just playbooks.

Organizations.

Why This Moment Matters

This idea only becomes viable now because several forces converged at once.

- Models became capable enough to perform meaningful work.

- API and local tooling became flexible enough to support mixed deployment models.

- Costs dropped enough to make scaled experimentation practical.

- Operators became more willing to trust AI with execution.

That combination creates the conditions for orchestration to matter more than raw model novelty.

The market spent the last phase proving that AI could talk. Then it proved AI could act. The next phase is proving whether AI can be managed as a reliable workforce.

Paperclip is one of the clearest early attempts at answering yes.

The Deeper Insight

The most important idea here is that Paperclip is not really about zero-human companies in the simplistic sense.

It is about moving the human to the layer where they create the most leverage.

Execution is being automated.

Coordination is being systematized.

So the highest-value human contribution becomes direction.

That includes taste, standards, judgment, and the definition of success.

The strongest operators will not be the ones doing the most tasks themselves. They will be the ones building the most effective systems for autonomous execution.

Food for Thought

If intelligence is becoming abundant, then the real scarcity shifts somewhere else.

Paperclip suggests that scarcity shifts to orchestration, accountability, and taste.

That is why this matters.

The future company may not be defined by how many people it hires.

It may be defined by how well it programs work.